How a Made-Up Word Taught Me That LLMs Are More Creative Than We Think

My curiosity led me to uncharted territory.

When I first dug into how large language models (LLMs) work, I ran into a recurring explanation: 👉 At their core, LLMs are just dealing with tokens.

You type something in, the model breaks your text into tokens, crunches probabilities, and spits out more tokens.

Simple. Mechanical. Almost… boring.

And naturally my skeptical brain went: “Okay, so what happens if you throw something at it that doesn’t exist in its whole token universe?”

So I decided to run an experiment. One word. Pure nonsense. Completely new.

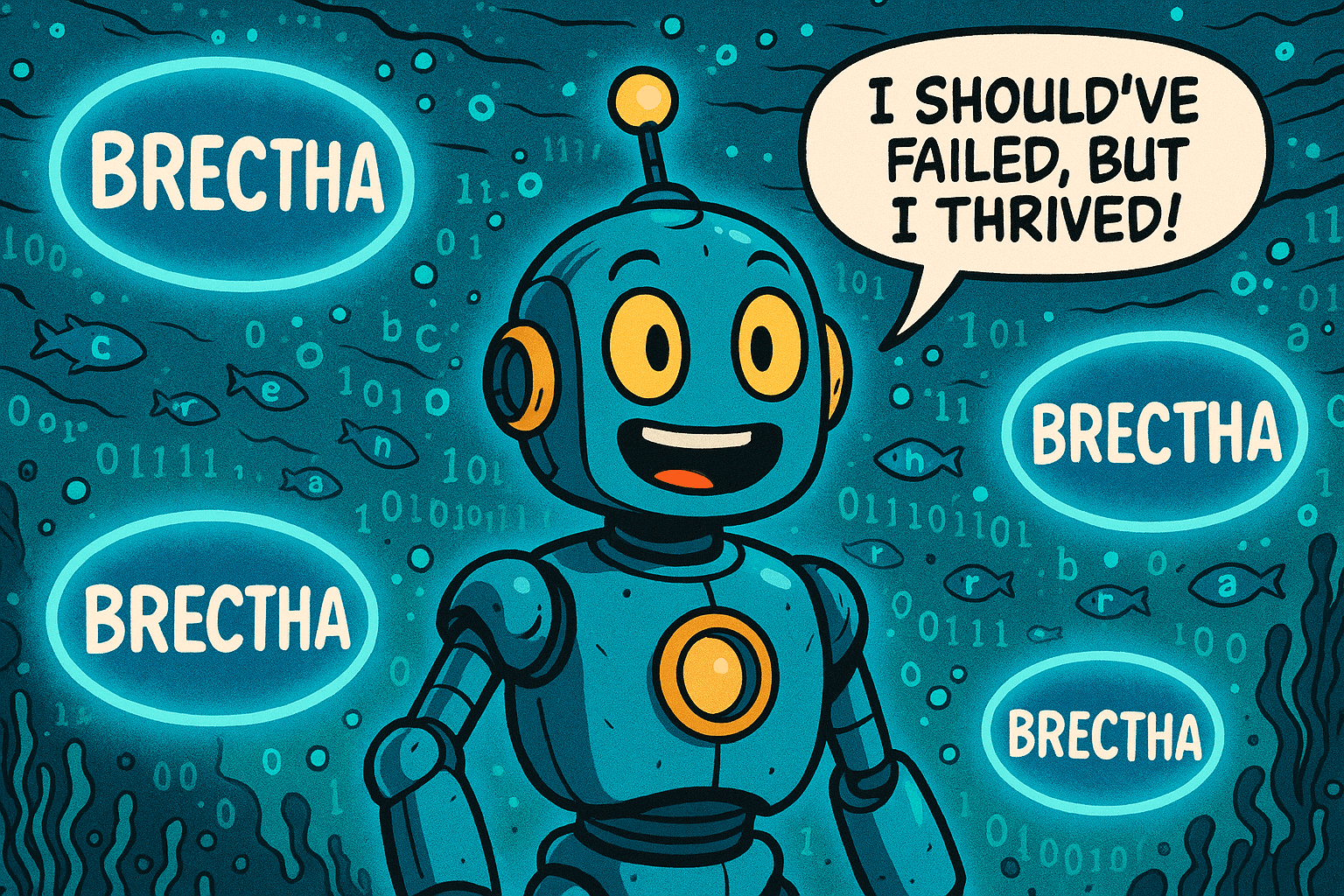

And that’s how Brectha was born.

The Experiment

I coined “Brectha” and gave it a meaning:

“To breathe underwater.”

A word no one has seen. Not in any dictionary, not in Tolkien, not in gaming lore. My expectation? The model would stumble. Maybe spit out, “I don’t know that word,” or worse, treat it as gibberish.

Instead, what happened actually shocked me.

What the AI Did with “Brectha”

Not only did the LLM take my word seriously, it… well, ran with it.

It created clean example sentences.

Built me a dictionary-style entry (part of speech, pronunciation, usage, even related forms like brecthing and brecthed).

Imagined how the word could enter pop culture: in sci-fi novels, VR games, meditation retreats, even marketing slogans.

Proposed a roadmap for how “Brectha” might weave itself into human language over the next decade.

What started as my scratch test suddenly looked like the early life of a real word. Here is a dictionary-style entry that Perplexity helped me craft:

Wait, How Is This Possible If It Only Knows Tokens?

Here’s the cool part.

As far as I can tell, the model had never seen “Brectha” before.

Zero training examples.

But they have seen endless patterns of:

how words like breathe/breath behave,

how suffixes like -a or -tha often feel,

how definitions, examples, and cultural usage typically look.

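So, when I dropped “Brectha” into the conversation, the model didn’t need a historical definition. Its tokenizer split the unfamiliar word into smaller pieces it already knew, and the model built a meaning on the fly from learned patterns plus the definition I supplied.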

That’s the magic:

Tokens aren’t limits. They’re Lego bricks.

And the model has learned a million ways to snap them together into new shapes.
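To make the Lego idea concrete, here is a minimal sketch of how a brand-new word can still be broken into familiar pieces. It is a toy, not how any particular model does it: real tokenizers typically learn their pieces from data (for example with byte-pair encoding), while the `TOY_VOCAB` set and the `tokenize` helper below are made up purely for illustration.

```python
import string

# Hypothetical toy vocabulary (an assumption for illustration only):
# a few multi-letter pieces plus every single lowercase letter as a fallback.
TOY_VOCAB = {"breath", "bre", "ct", "ha", "the", "under", "water"} | set(string.ascii_lowercase)


def tokenize(word, vocab):
    """Greedily split `word` into the longest pieces found in `vocab`."""
    word = word.lower()
    pieces = []
    i = 0
    while i < len(word):
        # Try the longest remaining chunk first, then shrink it.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                pieces.append(word[i:j])
                i = j
                break
        else:
            # Nothing matched (e.g. punctuation): keep the character as its own piece.
            pieces.append(word[i])
            i += 1
    return pieces


print(tokenize("breathe", TOY_VOCAB))  # a familiar word
print(tokenize("Brectha", TOY_VOCAB))  # the invented word
```

With this toy vocabulary, "Brectha" never appears as a whole entry, yet it still comes out as a sequence of known bricks. That is the sense in which a fixed vocabulary does not stop a model from handling a word nobody has written before.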

📅 The Possible Journey of “Brectha”

At this point, I thought: What if “Brectha” really took off? What would its journey look like?

The model even helped sketch a whimsical timeline:

2025 🫧

➝ Word is coined in an experiment ("Brectha" = to breathe underwater).

➝ Shared as a fun blog post.

➝ Early adopters chuckle and try using it in private conversations.

2026 🌊

➝ Sci-fi short stories and indie games pick it up.

➝ Diving enthusiasts start creating memes: "Learn to Brectha!"

2028 🎮

➝ Major VR game introduces "Brectha" as a special underwater ability.

➝ Word starts trending in gaming communities.

2030 🧘

➝ Meditation apps use "Brectha" metaphorically: "Brectha through stress."

➝ Influencers casually drop it into lifestyle and wellness content.

2032 📖

➝ The word gets its first unofficial listing in online slang dictionaries.

➝ Pop culture adopts it: interviews, TV shows, and late-night comedy skits.

2035 📚

➝ Language authorities recognize it in official dictionaries.

➝ Used both literally (new tech gadgets that help you brectha underwater) and figuratively ("Just brectha and relax").

Suddenly, a nonsense test word felt like it had a future… a simulated life cycle, a cultural footprint.

My “Aha!” Moment

Reading about LLMs, you’d think they’re bound to whatever tokens live in their fixed vocabulary. Talking to them is a completely different experience.

They don’t just repeat… they generalize.

They don’t just memorize… they improvise.

They don’t just accept old words… they welcome new ones.

That’s why “Brectha” worked. The model didn’t “know” it, but it could make sense of it instantly and let it grow into something bigger than just a test word.

And to me, that was pleasantly surprising. Like realizing your calculator not only solves your equation, but also writes you a story about where that number might live in the real world.

The Bigger Learning

This experiment taught me something valuable:

Yes, LLMs are statistical systems at the core.

But what emerges is a tool that feels creative, adaptive, even playful.

The token system that feels like a straitjacket in theory turns out, in practice, to be a powerful foundation for endless recombination.

Because language itself is alive. We’ve always invented words… selfie, emoji, google it. And now, interestingly enough, LLMs are companions in playing this game of invention.

Wrapping Up

So here’s my reflection: when you hear that “LLMs are only token machines,” don’t write them off. Go test them.

Coin your own word. See what happens.

I invented Brectha, expecting failure. Instead, I got a mini-dictionary, cultural lore, and even a sneak peek at its possible future in society. That felt less like rigid token math and more like co-creating with a language partner.

👉 Who knows? Maybe one day we’ll really need a word like “Brectha.” And when that moment comes, we’ll have already given it its first breath.

Like what you see?

Subscribe to my newsletter for curious minds at https://when.substack.com to hear when I publish new articles 🙂